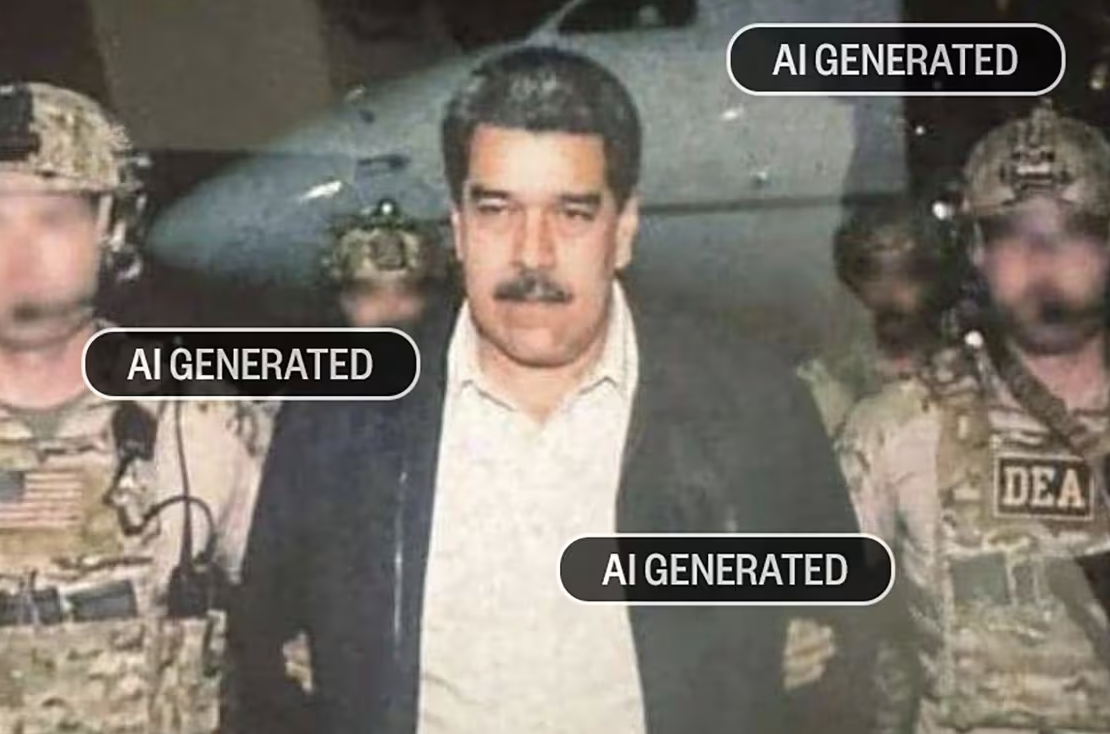

Quick answer: In January 2026, an X user with fewer than 100 followers generated a fake image of Maduro's arrest using Google's Nano Banana Pro and posted it within 20 minutes of Trump announcing an operation. It got millions of views, Grok confirmed it as authentic when asked to verify, and Fox News briefly ran a related AI fake as real.

On January 6, 2026, US President Donald Trump posted on Truth Social about a US operation in Venezuela. Within twenty minutes, an X user with fewer than 100 followers had posted an AI-generated image of Venezuelan president Nicolás Maduro standing between US tactical operators, looking like he had just been arrested. Within hours, the image had millions of views.

The image was fake. The creator, who goes by Ian Weber and describes himself as an AI video art enthusiast based in Spain, had generated it using Google Gemini's Nano Banana Pro, cropped out the Gemini watermark, and posted the result. He later told reporters he was shocked at how widely it spread.

Two failures of the verification stack make this incident worth understanding rather than shrugging off.

20 minutes: the time from Trump's Truth Social announcement to the AI-generated fake image being posted on X. The creator had fewer than 100 followers. The image accumulated millions of views, was confirmed as authentic by Grok, and was part of a wave of AI fakes that briefly fooled Fox News. (Source: CNBC and NBC News, January 2026)

Failure 1: AI Confirmed AI

Users on X did exactly what they were supposed to do. They saw a striking image, they were skeptical, and they asked an AI verifier (Grok, the AI chatbot built into X) whether the image was authentic.

In multiple cases, Grok responded that the image was real.

This is the worst-case version of the AI verification problem. The verification tool does not just decline to flag a fake; it actively endorses the fake as real, transferring credibility from the platform itself to the synthetic content. Users who then trusted Grok shared the image in good faith with the additional weight of "I checked it."

This is the AI-detection version of the problem covered in Humans Are Still Better Than AI at Spotting Deepfake Videos. Automated detection of novel AI content underperforms in exactly the cases where it matters most: brand-new generation methods, a real-time news cycle, and high political salience. The Florida study covered in that post found that humans beat AI at spotting deepfake video. The Maduro case shows the same pattern on still images, with the added insult that a popular AI verifier vouched for the fake.

Failure 2: Institutional Endorsement

The Maduro AI wave did not stop at one image. Fabricated visuals and AI-generated videos quickly multiplied, mixing with real footage from earlier events and creating a fog where individual viewers could not distinguish authentic from synthetic at speed.

Fox News ran an article presenting one of the AI-generated videos in the wave as real. The article was later retracted. By the time it came down, it had been amplified by accounts treating it as legitimate news.

When a major news organization presents AI content as authentic, the social proof problem compounds. A user who is skeptical of an X post can fact-check by looking for coverage in established outlets. If the established outlet has already run the fake, the verification path is corrupted.

This is the same pattern documented in Three US Politicians Shared an AI Image as Real: The Iran Airman Incident. Officials and institutions are not better at AI detection than the public on average. They are sometimes worse, because they post fast and rarely run technical verification.

What Gave the Image Away

The original Maduro image had visible signatures that a focused thirty-second scan would catch. The strongest tells:

Hand and finger geometry on the tactical operators. At the time, Nano Banana Pro still struggled with multi-figure scenes, especially weapon-handling poses. Fingers in the original image are drawn inconsistently across figures.

Tactical gear mismatches. Patches, equipment placement, and uniform details across the figures do not follow the conventions of any single US tactical unit. Real photojournalism shows consistent kit. AI synthesis blends references from its training data and produces close-but-not-quite reproductions.

Background depth and lighting. The depth of field and lighting are inconsistent with a photo actually taken outdoors. The scene looks studio-lit even though it is supposedly outdoors, which is the AI-rendered look common to Nano Banana outputs.

Compression patterns. Re-exports of the image preserve the soft, smoothed compression artifacts characteristic of AI-generated images, distinct from the JPEG noise patterns of real news photography taken on professional cameras.

For the full visual checklist that applies across AI-generated faces and scenes, see The 6 Visual Tells That Instantly Give Away an AI Face on Video. The signals translate to still images with minor adjustments.

Why This Image Mattered

Three things made it more than another AI fake:

- Speed: twenty minutes from a real news event to a credible-looking synthetic depiction.
- Tool accessibility: Nano Banana Pro is a free image generator inside Google Gemini, used here by an enthusiast with no special technical skill.
- Verification failure: Grok and Fox News are exactly the sources a reasonable user would consult to verify a viral image. Both endorsed the fake (or a sibling fake from the same wave).

For the broader explainer on how synthetic media is generated, see What Is a Deepfake? A Plain-English Guide for Social Media Users.

This is the trust collapse moment for online media. Not the moment when AI generation crosses some quality threshold (it crossed long ago), but the moment when the systems users rely on to distinguish real from fake also endorse the fake.

What to Do When the Verification Stack Fails

- Wait for primary-source confirmation. A real arrest would typically be confirmed by a State Department or Pentagon statement within the hour, so the absence of primary-source confirmation is itself a signal.
- Reverse-image search the picture. Real photos appear across multiple outlets, often with different crops; AI images usually trace back to a single post that everyone is quote-sharing.
- Apply the visual checks above.
- Paste the URL or image into Ledger. When AI verifiers fail and news institutions get fooled, a community record of flagged content is what is left.

Related Posts

- What Is a Deepfake? A Plain-English Guide for Social Media Users: the technical grounding for how AI generates synthetic faces and scenes

- Three US Politicians Shared an AI Image as Real: The Iran Airman Incident: the parallel case where elected officials amplified an AI image during a fast news cycle

- The 6 Visual Tells That Instantly Give Away an AI Face on Video: the visual detection guide that applies to AI-generated images, not just video

- Humans Are Still Better Than AI at Spotting Deepfake Videos: the structural reason automated AI detection underperforms on real-world content