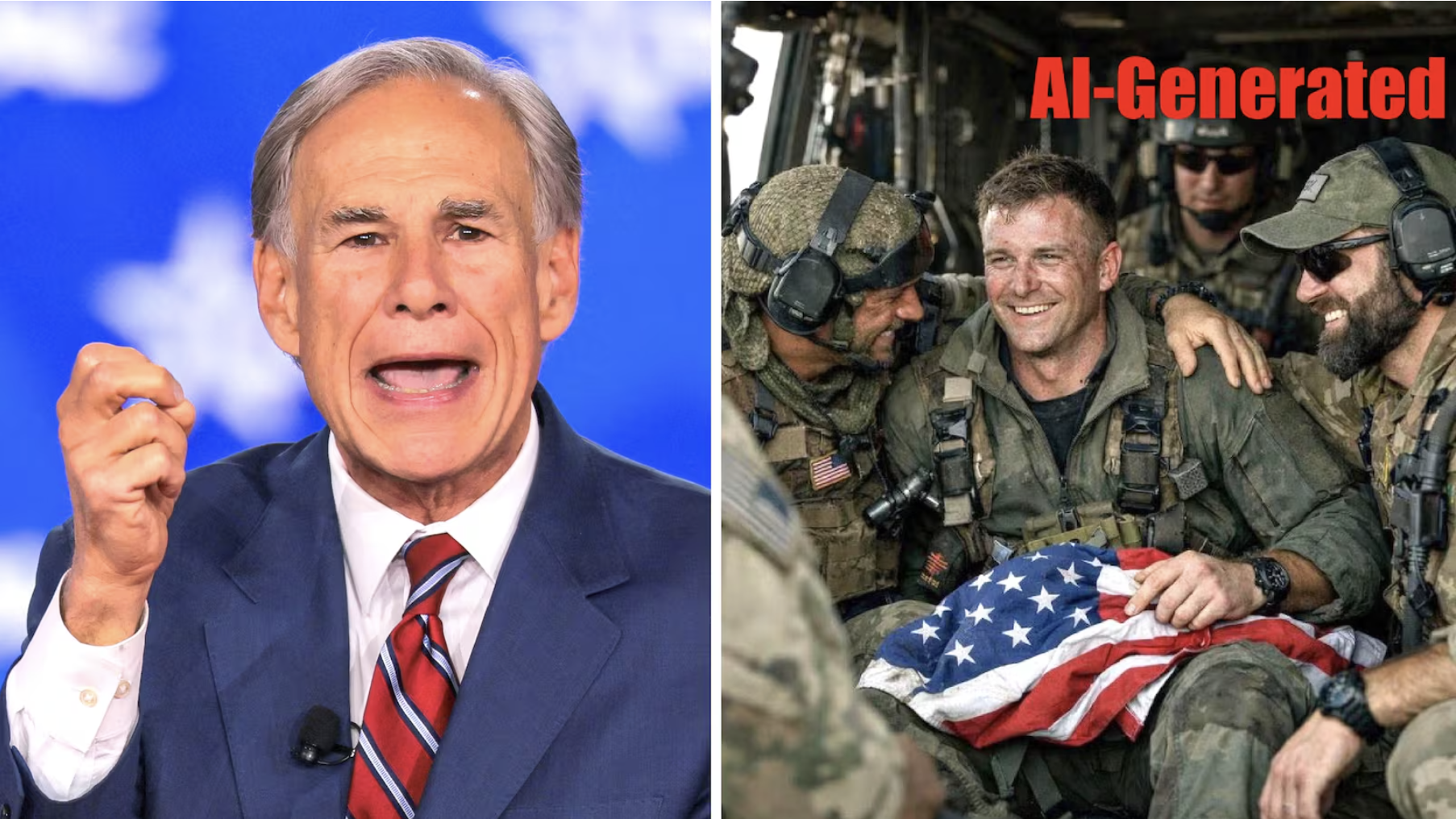

Quick answer: Texas Governor Greg Abbott, AG Ken Paxton, and Rep. Mike Lawler shared an AI-generated image of a US airman rescued in Iran on April 6, 2026. The image had three visual tells: an extra finger on the airman's hand, a uniformly blurred background, and American flag stripes that did not fold naturally. Hive Moderation rated it 99.9 percent synthetic.

On April 6, 2026, three elected Republican officials shared an image on social media that appeared to show a US airman being rescued after a downed aircraft incident in Iran. The image was AI-generated. It had an extra finger on the airman's hand, a uniformly blurred background, and American flag stripes that did not fold the way fabric folds.

Texas Governor Greg Abbott, Texas Attorney General Ken Paxton, and New York Representative Mike Lawler all posted it. Abbott and Paxton deleted their posts after Community Notes flagged the image as likely AI-generated. The AI detection service Hive Moderation later rated the image at 99.9 percent probability of synthetic content, according to PolitiFact's fact check and reporting by The Guardian.

This is a small story about an embarrassing moment for three politicians. It is also a larger story about how AI-generated misinformation moves through the information environment when the people sharing it have institutional authority and the image matches what they and their audiences want to be true.

What the Image Showed

The image depicted what appeared to be a US Air Force crew member reacting with emotion in the moments after rescue from Iranian territory. It circulated over Easter weekend as reports emerged about US aircraft operating over Iran and a downed-aircraft recovery event. Military.com traced the image's spread across major social platforms before fact-checkers caught up.

The image was not a photograph. It was generated by an AI model, and it had the signatures.

The Visual Tells

A trained eye catches these signatures in seconds. Most viewers, scrolling on a phone, do not.

An extra finger. AI image generators still fail on hands. Fingers merge, split, or appear in the wrong number. In this image, the airman's hand had an extra finger. This is the single most common AI image failure mode in 2026 and the easiest tell to verify.

A uniformly blurred background. The background was soft in a way that did not match the focal clarity of the subject. In a real photograph, blur comes from the lens, so it varies with distance from the focal plane. It does not produce an abrupt break between a sharp subject and a uniformly smooth, feature-poor background. AI models often fail to render a consistent depth of field because they generate each region from appearance patterns rather than simulating optics.

Flag stripes that did not fold naturally. Fabric has physics. The stripes on the American flag in the image did not curve or distort the way cloth distorts when it moves or is held. AI models do not simulate cloth physics. They imitate the appearance of fabric from training images, and the imitation often breaks along edges and folds.

A five-second check for any one of these signals would have flagged the image.

Why This Spread

The incident is not primarily a story about technical naïveté. It is a story about how AI-generated misinformation exploits three structural features of modern political communication.

Authority as verification substitute. When a governor shares an image, many followers do not question it. The assumption is that someone with access to staff, briefings, and communications infrastructure would not share something fake. This assumption is increasingly wrong. Elected officials post content at the same speed as everyone else, from the same consumer apps, with the same cognitive shortcuts.

Emotional alignment. The image matched what Abbott, Paxton, and Lawler wanted to show their audiences: a successful rescue, American resilience, a hero moment. When an image aligns with what viewers already believe or hope for, they process it faster and scrutinize it less. This is true of all viewers, not just politicians. Public officials are a high-visibility case of a universal pattern.

Speed as a premium. In a fast news cycle around a military event, the first officials to share a compelling image gain engagement, and no incentive structure rewards waiting to verify. As a result, the first unverified version often goes viral before any verified content arrives.

The Abbott Pattern

This is the second time in roughly a month that Greg Abbott has shared fabricated content related to the Iran conflict. In the earlier incident, he shared what he believed was genuine footage of an Iranian aircraft being shot down by a US warship. The clip was captured gameplay from War Thunder, a combat flight simulator video game.

Two incidents in thirty days is a pattern. It suggests that a governor's communications workflow, in which staff pass along clips they find online and the governor amplifies them, is not equipped to verify fabricated content in real time. The gaps that allow a War Thunder clip to be shared as real Iran footage are the same gaps that allow an AI-generated airman image to be shared as a rescue photograph.

This is not an Abbott-specific problem. It is an information-environment problem that is easy to document when it affects officials who post frequently and at scale.

How to Spot an AI-Generated News Image

The airman image had classic signatures that appear across most current AI image generators. Work through these checks:

- Check hands and fingers first (fused joints, identical nails). This is the most reliable tell in 2026.

- Check the background for uniformly blurry or uncannily sharp regions that don't match the subject's depth of field.

- Check fabric and hair for cloth that doesn't fold to physics.

- Check any visible text (unit patches, signage) for garbled letters.

- Reverse-image search before you share: an AI image with no real origin is itself a signal.
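
For readers who want to turn the checks above into a repeatable habit, here is a minimal sketch of a pre-share checklist in Python. It is not a detector and does not analyze pixels: a human runs each check and records whether it looked suspicious. The check names, the `Review` class, and the "share only if every check was done and none was flagged" rule are all hypothetical, chosen for illustration rather than taken from any real tool.

```python
from dataclasses import dataclass, field

# Hypothetical check names that mirror the checklist in this article.
CHECKS = (
    "hands_and_fingers",
    "background_blur",
    "fabric_and_hair",
    "visible_text",
    "reverse_image_search",
)


@dataclass
class Review:
    """Manual findings for one image: check name -> True if it looked suspicious."""
    findings: dict = field(default_factory=dict)

    def unchecked(self):
        # Checks the reviewer has not performed yet, in checklist order.
        return [c for c in CHECKS if c not in self.findings]

    def flagged(self):
        # Checks the reviewer performed and marked as suspicious.
        return [c for c in CHECKS if self.findings.get(c)]

    def ok_to_share(self):
        # Conservative rule (an assumption): every check done, none flagged.
        return not self.unchecked() and not self.flagged()


# The airman image as described in this article: three visual tells were present.
airman = Review({
    "hands_and_fingers": True,   # extra finger
    "background_blur": True,     # uniformly blurred background
    "fabric_and_hair": True,     # flag stripes that did not fold naturally
})

print("Flagged:", airman.flagged())
print("Not yet checked:", airman.unchecked())
print("OK to share:", airman.ok_to_share())
```

The rule is deliberately strict: an image with unperformed checks is treated the same as a flagged one, which matches the article's point that a few seconds of checking should come before sharing, not after.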

A full visual detection guide for AI faces in both photos and video is at The 6 Visual Tells That Instantly Give Away an AI Face on Video. A pre-share checklist for any video or image is at How to Verify a Video Before Sharing.

The pattern won't stop on its own. AI generation is free, the political cycle is active, and platform verification is slow. The line of defense is your eye, your verification habits, and a community record. Paste a suspicious URL into Ledger to see what others have already flagged.
