Quick answer: A Reddit operator just shared how they built a fake AI Instagram model from scratch, funneled men to a Fanvue paywall through bio links, and pulled in over $80,000 in a single week before Instagram banned the account. This post breaks down the exact playbook, the tells that give these accounts away, and how to spot one before you pay.

In May 2026, an operator running a fake AI-generated Instagram model posted a celebratory Reddit thread describing exactly how they did it. The account was their fourth in a series. The first three had already generated enough money for them to quit their day job. The fourth, by their own account, hit $80,000 in a single week before Instagram took it down.

The operator wrote the thread themselves and laid out the full playbook in plain language. The mechanics they described are running on Instagram, TikTok, X, and Fanvue right now, against real people who think they are paying a real woman for content. This post breaks down what they did, what makes it work, the six tells that give these accounts away, and what to do if you suspect one.

$80,000+: pulled in by a single AI Instagram account in one week, according to the operator's own Reddit post. The account was their fourth; the first three had already generated enough income to "quit my day job." (Source: Reddit operator post, May 2026.)

The Playbook, in the Operator's Own Words

The Reddit thread describes a five-step system. Stripped of the celebratory framing, it reads as a textbook fraud-farm pipeline.

1. Build the AI character from scratch. The operator picks a niche (specific look, ethnicity, body type, vibe), then generates the model using image generation tools. The character is consistent across photos because the same prompt template plus seed control produces variations of the same synthetic person.

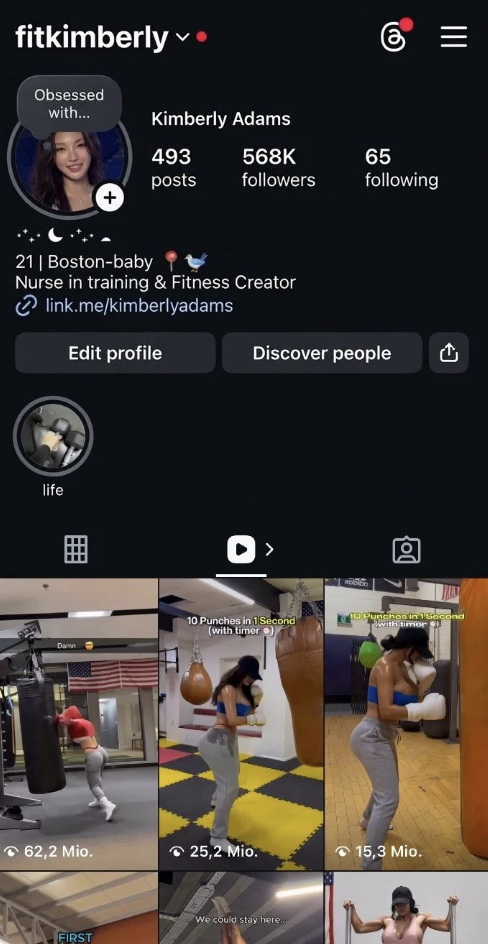

2. Post teaser content only on Instagram. Instagram's terms ban explicit content, so the public-facing posts are deliberately just-suggestive-enough. The operator describes them as "the hottest, most addictive photos and short videos that left guys wanting more." The posts are designed to drive clicks to the bio link, not to sit on the platform.

3. Funnel to Fanvue via bio link. Fanvue is a Patreon-style platform that allows the explicit content Instagram does not. The bio link and story links push followers to the Fanvue page where the operator charges for subscriptions, custom content, and chats.

4. Sell subscriptions plus paid chats and custom content. Once a follower pays, they get access to a content library, plus the option to message the "model." The chat side is what extracts the highest per-customer revenue, and language model workflows let one operator keep dozens of those conversations going at once.

5. Scale across multiple accounts in parallel. This was account number four. The pattern is serial: operate until Instagram bans, then spin up new accounts from the same template. Once the funnel is set up, the conversion runs on its own. That is the structural feature that makes this fraud category profitable at scale.

Why It Works

The mechanics that made the scam profitable in 2025 are now cheap and accessible enough that any operator with $50 of generation credits and a free Instagram account can run the same playbook.

The synthetic character is consistent across photos. Earlier image generation produced different-looking faces from different prompts. Modern tools allow seed-locking, character LoRAs, and persona libraries that keep the same "person" appearing across hundreds of generated images. This is what makes a viewer scroll through the account and conclude that one real woman is posting all of this.

Conversation runs at scale via language models. A single operator with an LLM-driven chat workflow can maintain the illusion of personal attention across hundreds of paying customers simultaneously. The customer feels like they are talking to a specific person who remembers them. They are talking to a model that re-reads the previous conversation each time it answers.

Platform incentives are misaligned. Instagram bans accounts for explicit content but does not ban accounts for being AI-generated. Fanvue does not categorically ban AI-generated personas the way Instagram bans explicit content, so the operator's Fanvue page often survives even after the Instagram ban. Neither platform's rules close the loop.

Customer recourse is weak. When the Instagram account is banned, a customer who subscribed on Fanvue through the bio link still has an active subscription, and the Fanvue side keeps running. The customer rarely thinks of themselves as a fraud victim because they got "what they paid for." Many never find out the persona was synthetic.

For the broader explainer on how AI generates synthetic people from text prompts, see the pillar guide on what a deepfake actually is. The same image generation models powering the political deepfake of the AI MAGA influencer Emily Hart are powering this fraud category.

Six Tells of an AI Instagram Model

These show up consistently across documented AI Instagram model accounts in 2025 and 2026.

1. The photo set is too consistent. Real people have lighting variation, off-day photos, awkward angles, blurry shots, group photos, and changing weight or hairstyle over months of posts. AI-generated personas have a remarkably consistent look across every photo: same lighting style, same angles, same flattering pose vocabulary.

2. Hands are wrong on close inspection. Modern image generation handles faces well. Hands still fail in subtle ways: extra or fused fingers, asymmetric proportions, jewelry that doesn't track across photos, fingernails that change shape between shots. Zoom in on hands.

3. No real-life context appears in the photos. No photos at family dinners, no photos with friends, no photos at recognizable real locations, no photos in real-world contexts that would be hard to generate. The persona exists only in studio-lit single-subject shots.

4. The bio leads off-platform fast. The bio link goes directly to Fanvue, OnlyFans, Patreon, or a personal website that funnels to one of those. Real personal Instagram accounts rarely lead with a paywall.

5. Engagement is shallow. Comments are mostly emojis and short praise. The account responds to followers in short, generic replies that read like template output. There are few back-and-forth conversations of the kind real personal accounts naturally accumulate.

6. Reverse image search returns nothing or matches synthetic-image catalogs. Drop the photos into Google Images or TinEye. Real people's photos show up tagged elsewhere on the web (events, school yearbooks, friends' tagged photos). AI-generated photos either return zero matches or match other AI-content sites.
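
The six tells above can be treated as a simple checklist. The sketch below is a minimal illustration in Python, assuming each tell is recorded by hand as true or false; the class name, field names, and the thresholds in `triage` are hypothetical choices made for this example, not calibrated values or part of any platform's tooling.

```python
from dataclasses import dataclass, fields


@dataclass
class TellChecklist:
    """One boolean per tell; True means you observed that tell on the account."""
    photo_set_too_consistent: bool = False
    hands_wrong: bool = False
    no_real_life_context: bool = False
    bio_leads_off_platform: bool = False
    shallow_engagement: bool = False
    reverse_search_empty_or_synthetic: bool = False


def count_tells(checklist: TellChecklist) -> int:
    """Count how many of the six tells were observed."""
    return sum(bool(getattr(checklist, f.name)) for f in fields(checklist))


def triage(checklist: TellChecklist) -> str:
    """Map the count to a rough label. Thresholds are illustrative assumptions."""
    n = count_tells(checklist)
    if n >= 4:
        return "likely synthetic"
    if n >= 2:
        return "needs a closer look"
    return "no strong signal"


if __name__ == "__main__":
    observed = TellChecklist(
        photo_set_too_consistent=True,
        no_real_life_context=True,
        bio_leads_off_platform=True,
        reverse_search_empty_or_synthetic=True,
    )
    print(count_tells(observed), triage(observed))
```

No single tell is conclusive on its own, which is why the sketch counts them instead of stopping at the first match.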

For the broader visual-tells framework that applies to any AI-generated face or video, see the 6 visual tells that instantly give away an AI face. The six tells above are the Instagram-context application of those principles.

What to Do If You Suspect One

Three steps in order.

1. Stop paying immediately. Cancel the Fanvue or OnlyFans subscription before any more money moves. The platform refund policy varies, but stopping the bleed is the first step regardless.

2. Document the operator pattern. Take screenshots of the Instagram account, the bio link target, the Fanvue page, and any photos you suspect are AI-generated. Save the screenshots somewhere offline. The operator will likely delete the account and rebuild under a new handle once they realize they are flagged. Documentation persists.

3. Report to the platforms. On Instagram, report the account under "False Information" and "Impersonation." On Fanvue, report under "Synthetic content not disclosed" if that flag exists, or under "Misrepresentation." Reports do not always result in immediate bans, but they feed pattern data the platforms eventually act on.
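
For the documentation step, one way to make saved screenshots more useful later is to record a timestamped manifest with a hash of each file, so you can show the files have not changed since you captured them. The sketch below is a minimal example using only the Python standard library; the function names, the `manifest.json` filename, and the manifest fields are assumptions made for illustration.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def build_manifest(folder: str, account_handle: str, bio_link: str) -> dict:
    """Hash every file in the folder and record when the manifest was made."""
    entries = []
    for path in sorted(Path(folder).iterdir()):
        # Skip subfolders and any manifest written by an earlier run.
        if not path.is_file() or path.name == "manifest.json":
            continue
        digest = hashlib.sha256(path.read_bytes()).hexdigest()
        entries.append(
            {"file": path.name, "sha256": digest, "bytes": path.stat().st_size}
        )
    return {
        "account_handle": account_handle,
        "bio_link": bio_link,
        "recorded_at_utc": datetime.now(timezone.utc).isoformat(),
        "files": entries,
    }


def write_manifest(folder: str, account_handle: str, bio_link: str) -> Path:
    """Write the manifest next to the screenshots and return its path."""
    manifest = build_manifest(folder, account_handle, bio_link)
    out = Path(folder) / "manifest.json"
    out.write_text(json.dumps(manifest, indent=2))
    return out
```

Calling `write_manifest` on your screenshot folder with the account's handle and bio link writes `manifest.json` alongside the images; keep a copy of the whole folder offline.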

What Ledger Does Differently

Platform takedown removes one Instagram account. The operator who posted the Reddit thread described losing the $80K account when Instagram banned it, and immediately starting the next one. The platforms close accounts. They do not close operators.

A community-built record of flagged AI accounts persists across platform takedowns. When Ledger users flag a synthetic Instagram account, the flag stays attached to the operator pattern: the photo set, the writing voice, the bio link target. When the same operator spins up a new account from the same template, the cumulative flag history is searchable.
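
To illustrate the idea of a flag that follows the operator pattern instead of the account, here is a minimal sketch in Python. It is not Ledger's implementation: the `Flag` and `FlagRecord` names are hypothetical, and it keys the history on the bio link target alone, which is only one of the three pattern signals mentioned above (photo set and writing voice are left out for simplicity).

```python
from collections import defaultdict
from dataclasses import dataclass
from urllib.parse import urlsplit


def normalize_link(url: str) -> str:
    """Reduce a bio link to host + path so trivial variations still match."""
    parts = urlsplit(url if "://" in url else "https://" + url)
    host = parts.netloc.lower().removeprefix("www.")
    # Lowercasing the path assumes the target treats handles case-insensitively.
    return host + parts.path.rstrip("/").lower()


@dataclass(frozen=True)
class Flag:
    platform: str
    handle: str
    bio_link: str
    note: str = ""


class FlagRecord:
    """Stores flags by bio link target, so history outlives any one handle."""

    def __init__(self) -> None:
        self._by_link: dict[str, list[Flag]] = defaultdict(list)

    def add(self, flag: Flag) -> None:
        self._by_link[normalize_link(flag.bio_link)].append(flag)

    def history_for(self, bio_link: str) -> list[Flag]:
        """Return every earlier flag that pointed at the same paywall page."""
        return list(self._by_link.get(normalize_link(bio_link), []))


if __name__ == "__main__":
    record = FlagRecord()
    # Handles and URLs below are made-up examples.
    record.add(Flag("instagram", "persona_v3", "https://www.fanvue.com/Example_Persona/"))
    # A new handle that reuses the same paywall page surfaces the old flag.
    for old in record.history_for("fanvue.com/example_persona"):
        print(old.platform, old.handle)
```

Because the lookup key is the paywall page and not the Instagram handle, a banned account's history is still found when a replacement account points to the same destination.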

If you came here wanting to verify an Instagram account, that is exactly what Ledger is for. Paste the URL or @username into the AI video detector. It is free and requires no signup.

If you want to help build the record, join the iOS or Android waitlist and be among the first to flag accounts when the app ships.

Related Posts

- What Is a Deepfake? A Plain-English Guide for Social Media Users: the technical foundation that explains how AI generates consistent synthetic personas across hundreds of photos

- Emily Hart, the AI MAGA Influencer Who Fooled Millions: the political-influencer parallel where the same image-generation playbook ran for political reach instead of paywall revenue

- AI Is Cloning Your Voice and Face From YouTube to Sell Scams. Here Is What to Do.: the commercial-likeness theft variant where stolen real-person photos drive the same fraud farms

- Deepfake Romance Scams Cost Americans $1.1B in 2025. Here Is How to Spot One.: the dating-app parallel where the same AI persona infrastructure runs against romantic-relationship targets instead of subscription customers